Making artificial intelligence (AI) more inclusive is one of the most compelling issues of our time. Inclusive AI means effective AI because diverse communities around the world can benefit from it. Inclusive AI is more just and mindful, too. Businesses that develop AI-based products and services are responding. A case in point: Google.

Google Introduces a More Inclusive Approach to AI

On May 11, at Google’s annual developer conference (known as Google I/O), the company announced some important ways that it is making AI more inclusive. They include:

Improving Skin Tone Representation in Google Products

In partnership with Harvard professor and sociologist Dr. Ellis Monk, Google is releasing a new skin tone scale designed to be more inclusive of the spectrum of skin tones people see in society.

Dr. Monk has been studying how skin tone and colorism affect people’s lives for more than 10 years. The culmination of Dr. Monk’s research is the Monk Skin Tone (MST) Scale. This is a 10-shade scale that Google will incorporate into various Google products over the coming months. Google is also openly releasing the scale so anyone can use it for research and product development.

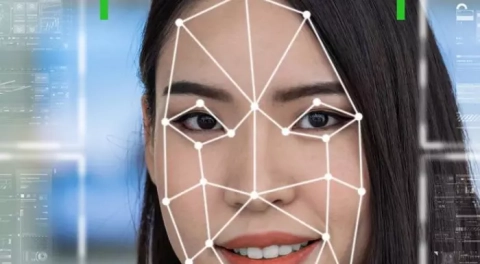

Google says that its goal is to support inclusive products and research across the industry. So, how does this affect AI? One example, according to Google: computer vision, a type of AI that allows computers to see and understand images. When not built and tested intentionally to include a broad range of skin tones, computer vision systems have been found to underperform for people with darker skin.

The idea is for the MST Scale to help Google build more representative data sets so that Google can train and evaluate AI models for fairness and inclusive representations of skin tones.
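
To make the evaluation step more concrete, here is a minimal sketch of how a team might break a model's accuracy down by skin tone group. This is not Google's method. It assumes each test example has already been labeled with an MST value from 1 to 10, and the function names (`accuracy_by_tone`, `largest_gap`) and sample records are hypothetical, made up for illustration.

```python
from collections import defaultdict

def accuracy_by_tone(records):
    """Return {mst_tone: accuracy} for records of (mst_tone, predicted, actual).

    Assumption: each record carries an MST label between 1 and 10.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for tone, predicted, actual in records:
        if not 1 <= tone <= 10:
            raise ValueError(f"MST tone must be 1-10, got {tone}")
        total[tone] += 1
        if predicted == actual:
            correct[tone] += 1
    return {tone: correct[tone] / total[tone] for tone in sorted(total)}

def largest_gap(per_tone):
    """Difference in accuracy between the best- and worst-served tone groups."""
    return max(per_tone.values()) - min(per_tone.values())

# Hypothetical records for illustration only: (mst_tone, predicted, actual)
sample = [
    (2, "face", "face"),
    (2, "face", "face"),
    (9, "face", "face"),
    (9, "no_face", "face"),
]
per_tone = accuracy_by_tone(sample)
print(per_tone)
print(largest_gap(per_tone))
```

A large gap between groups would suggest that the training or test data under-represents some skin tones, which is the kind of problem a more representative data set is meant to address.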

One notable application of this more inclusive approach: Google Search, which is easily the most popular way people navigate the web. In our visually oriented society, people rely on image-based searches more and more often.

Google is using the MST Scale to make visual search more inclusive. Google shared the example of people using visual search to find make-up products and options. A more inclusive search makes it possible for people to refine image results by skin tone. So, for example, if you’re looking for “everyday eyeshadow” or “bridal makeup looks,” you’ll more easily find results that work better for your needs – in other words, results that are not only inclusive, but more personal.

As Google noted in a blog post,

Seeing yourself represented in results can be key to finding information that’s truly relevant and useful, which is why we’re also rolling out improvements to show a greater range of skin tones in image results for broad searches about people, or ones where people show up in the results. In the future, we’ll incorporate the MST Scale to better detect and rank images to include a broader range of results, so everyone can find what they’re looking for.

It’s notable that Google will openly release the Monk Skin Tone Scale so that others can use it in their own products and give Google feedback on how to improve it. In 2021, Google caught some blowback after the company shared a preview of an AI-powered dermatology assist tool intended to help people understand what’s going on with issues related to their skin, hair, and nails. Google was criticized for not being inclusive enough in its data set. By opening up the Monk Skin Tone Scale to community-based feedback, Google appears to be tackling this issue head-on.

A More Inclusive Google Translate

Google is also making Google Translate more inclusive: the online tool has learned 24 new languages and now supports 133 languages globally.

In all, more than 300 million people speak the newly included languages. The new languages include Assamese (used by about 25 million people in Northeast India); Bhojpuri (used by about 50 million people in northern India, Nepal, and Fiji); Luganda (used by about 20 million people in Uganda and Rwanda); and many more. (See a complete list here.)

What’s especially intriguing about this development is the role of machine learning. This update marks the first time Google has used Zero-Shot Machine Translation. This is a machine learning model that sees only monolingual text, meaning it learns to translate into another language without ever seeing an example translation. Zero-Shot Machine Translation is an example of the application of synthetic data, which is data generated with the assistance of AI. Synthetic data is based on a set of real data. After being fed real data, a computer simulation or algorithm generates synthetic data to train an AI model. We define synthetic data and some of its applications in this blog post and this one.
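
The general idea of synthetic data (real data in, generated data out) can be illustrated with a deliberately simple sketch. This is not how Zero-Shot Machine Translation works and is not Google's implementation. It assumes the real data is a short list of numbers (here, hypothetical sentence lengths), fits a normal distribution to them, and samples new values from that distribution. The names `fit_gaussian` and `generate_synthetic` are our own.

```python
import random
import statistics

def fit_gaussian(real_values):
    """Summarize the real data by its mean and sample standard deviation."""
    return statistics.mean(real_values), statistics.stdev(real_values)

def generate_synthetic(real_values, n, seed=0):
    """Draw n synthetic values from a normal distribution fitted to the real data.

    Assumption: a normal distribution is a reasonable stand-in for the real
    data. Production systems use far richer generative models.
    """
    mean, stdev = fit_gaussian(real_values)
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    return [rng.gauss(mean, stdev) for _ in range(n)]

# Hypothetical "real" data: sentence lengths in words (made up for illustration)
real_sentence_lengths = [8, 12, 9, 15, 11, 10, 14]
synthetic = generate_synthetic(real_sentence_lengths, n=1000)

print(round(statistics.mean(real_sentence_lengths), 2))
print(len(synthetic))
```

The synthetic values follow the overall shape of the real data without copying any single real record, which is what makes them usable as additional training material.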

Crucially, Google says it is taking an inclusive approach in gathering the data needed for Zero-Shot Machine Translation to work effectively. This includes collaborating with native speakers, professors and linguists.

Mindful AI Matters

The latest developments from Google I/O underscore the need for Mindful AI, or AI that is more valuable, trustworthy, and inclusive. We define Mindful AI as follows: developing AI-based products that put the needs of people first. Mindful AI especially considers the emotional wants and needs of all the people for whom an AI product is designed – not just a privileged few. When businesses practice Mindful AI, they develop AI-based products that are more relevant and useful to all the people they serve.

AI that serves only a small segment of the population is limited in its relevance and usefulness. This is why businesses are paying more attention to capabilities such as AI localization, defined as training AI-based products and services to adapt to local cultures and languages. A voice-based product, e-commerce site, or streaming service must understand the differences between Canadian French and the French spoken in France, or that in China, red is considered an attractive color because it symbolizes good luck. AI-based products and services don’t know these things unless people train them using fair, unbiased, and locally relevant data. And an AI engine requires even more data at a far greater scale. (For example, for one of our clients, OneForma by Centific delivered 30 million words of translation within eight weeks.) Consequently, more people are needed to train AI to deliver a better result.

Mindful AI is both right and sensible. For instance, drugs and vaccines based on a more diverse global data set can be more effective in fighting health problems on a global scale, among them the current COVID-19 pandemic.

Mindful AI does not happen without having people in the loop to train AI-powered applications with data that is unbiased and inclusive. For example, at OneForma, we help our clients develop localized AI-based products for different cultures by relying on globally crowdsourced contributors who possess in-market subject matter expertise, mastery of 200+ languages, and insight into local forms of expression such as emoji on different social apps.

Contact Centific

Mindful AI is not a solution. It’s an approach. There is no magic bullet or wand that will make AI more responsible and trustworthy. AI will always be evolving by its very nature. But Mindful AI takes the guesswork out of the process. Contact Centific to get started.

Photo source: https://pixabay.com/illustrations/diversity-people-heads-humans-5582454/